1. Concept

Every project should start with setting aside enough time to develop a concept and gather proper reference.

When creating an environment such as this, you don’t want to pick a concept that is overly complicated or outside of your skillset. At the same time, something too basic or uninteresting might not grab the attention you want, or may not challenge you enough in the process. I always feel like at the end of a project, you should come out of it with new knowledge.

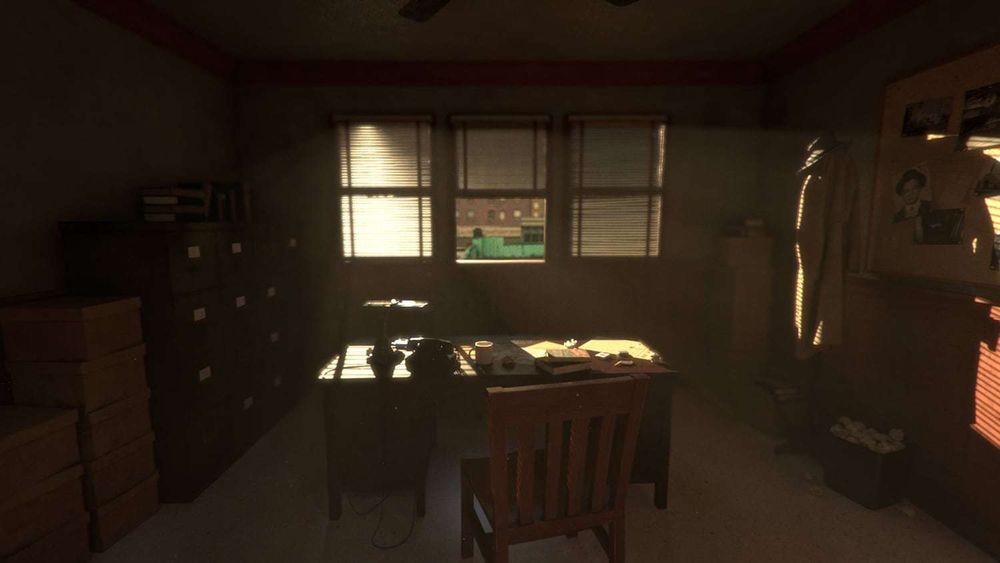

The concept I ended up choosing was a P.I.’s office set in the 1940s. It offered a good mix of varied materials, enough props to fill the room without looking overly cluttered or too sparse, and a few different lighting ideas I could use to set the mood.

2. Reference, Block-out & Modelling

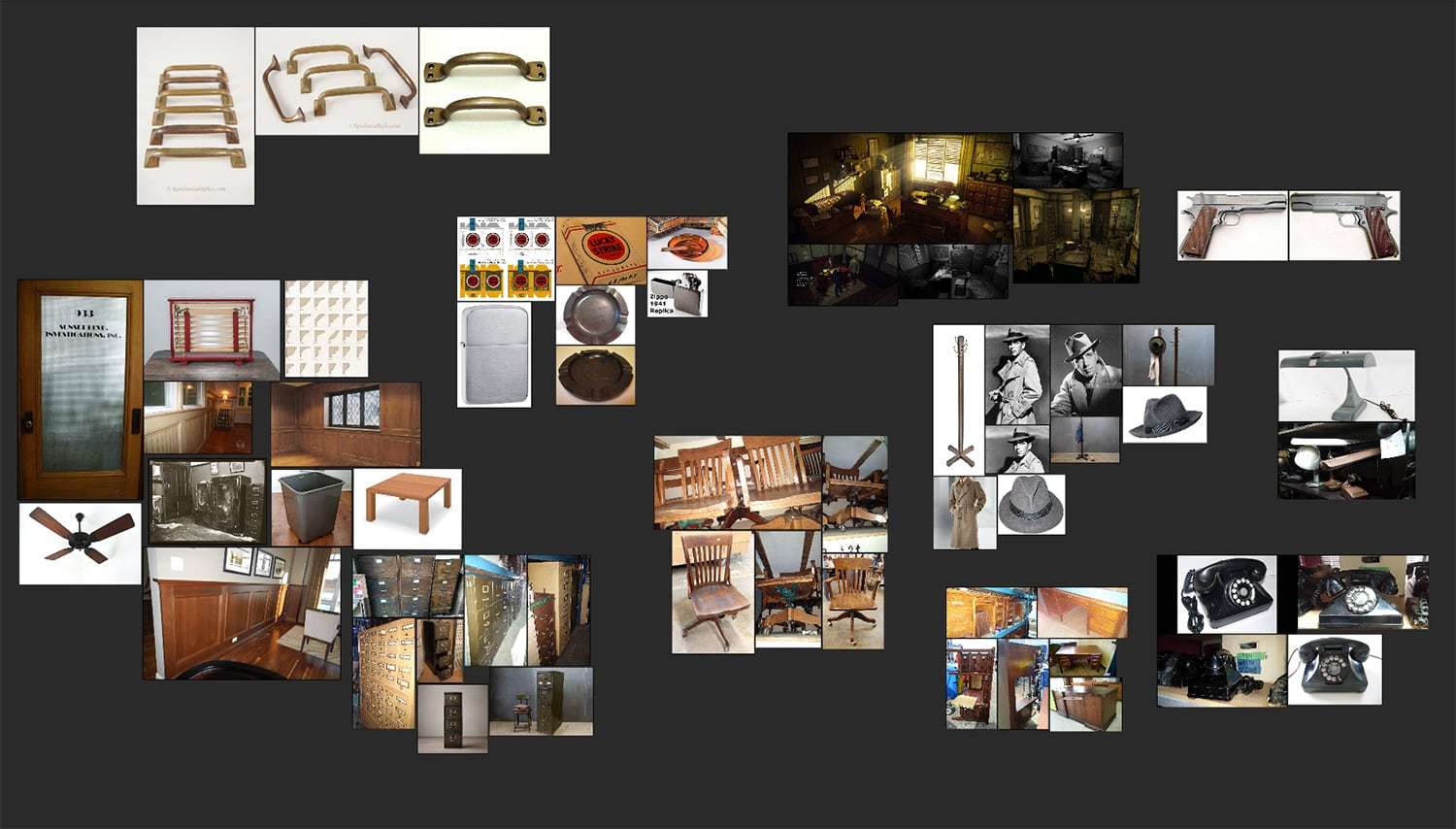

When gathering reference, I used a variety of sources that were at my disposal. I visited a local prop shop to snap reference photos, immersed myself in media set in the 1940s (including a quick perusal of Rockstar’s “L.A. Noire” and a few movies such as “L.A. Confidential” and “Casablanca”) and, of course, searched for images on the internet. PureRef (pictured) helped me out immensely in gathering all my images and concepts on one giant virtual pin-board, and I kept it open on my second monitor at all times.

Once reference was gathered and I had pinned down the props I wanted in my scene, I began to block out the room and the cameras I needed. Blocking in camera shots at this point let me determine which objects and areas of my scene mattered most, and allowed me to prioritise certain props over others. For example, two of my camera shots take you very close to the desk and its props, whereas the back area near the windows and the door is quite far away from the camera.

Therefore, the desk and the objects that populated it were to be allotted more time in my schedule than the filing cabinets, books and table situated in the background.

I didn’t spend too much time on this process; I used quick model passes of the props to lay out the scene and its cameras. Everything was modelled to real-world scale for proper accuracy. Once this staging was done, I UV unwrapped every object and moved on to proper modelling on the “block out objects.”

Once modelling was finished, I applied basic shaders to everything, with simple colours and correct reflective properties. The image below is an early test render with temporary lighting and basic test shaders.

I blocked in my lighting at this stage and did a few test renders. The purpose of this was to start setting the scene and the overall feel I wanted in the end product. Lighting has such a large impact on mood, so the decisions I made early on set the basis for everything that followed. Once everything was looking the way I wanted it to, I moved on to texturing.

3. Texturing & Shading

For the texturing stage, I used a combination of different software, including Mari, Substance Painter and Substance Designer.

Substance Designer was used to create a base for quite a few of my props, such as the filing cabinets, the floor and the desk lamp. This gave me complete control over the look of the texture (large-scale colour variation, large details, etc.), and I used it to create simple procedural bases to paint over in Mari.

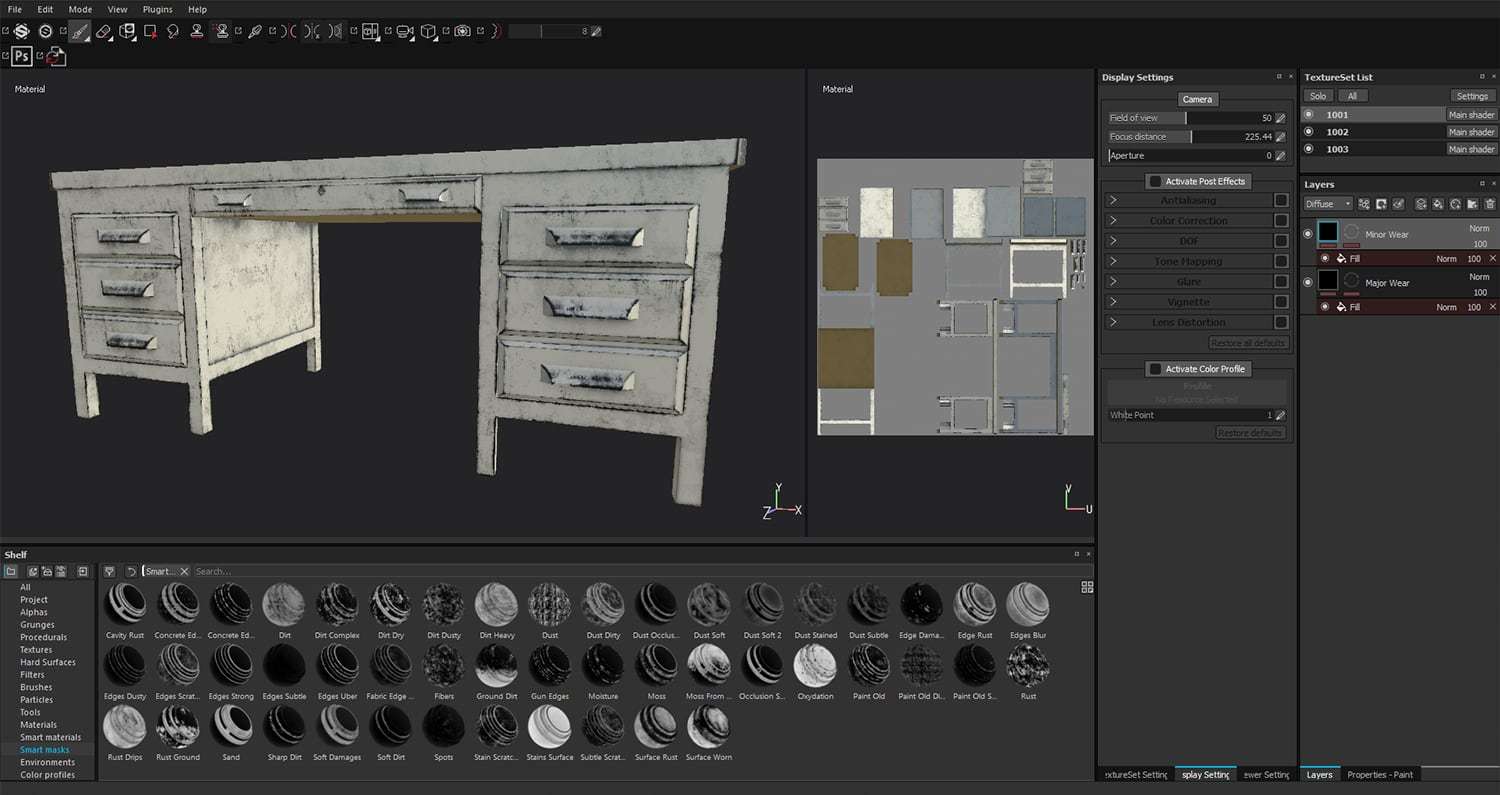

Once I finished in Substance Designer, I moved on to Substance Painter to create all the masks I needed for use in Mari. By baking out the different channels in Substance, I was able to create masks for grunge, dust, rust, scratches, edge wear, etc.

I was able to generate anything and everything I needed for each and every object. However, many of the generated masks were too similar to one another if I used them on multiple objects, or too “perfect” if used as is (e.g. edge wear running perfectly along all four edges of the desk). So, once I had all the masks I needed for a particular object, I exported them and brought everything into Mari to customise from there.

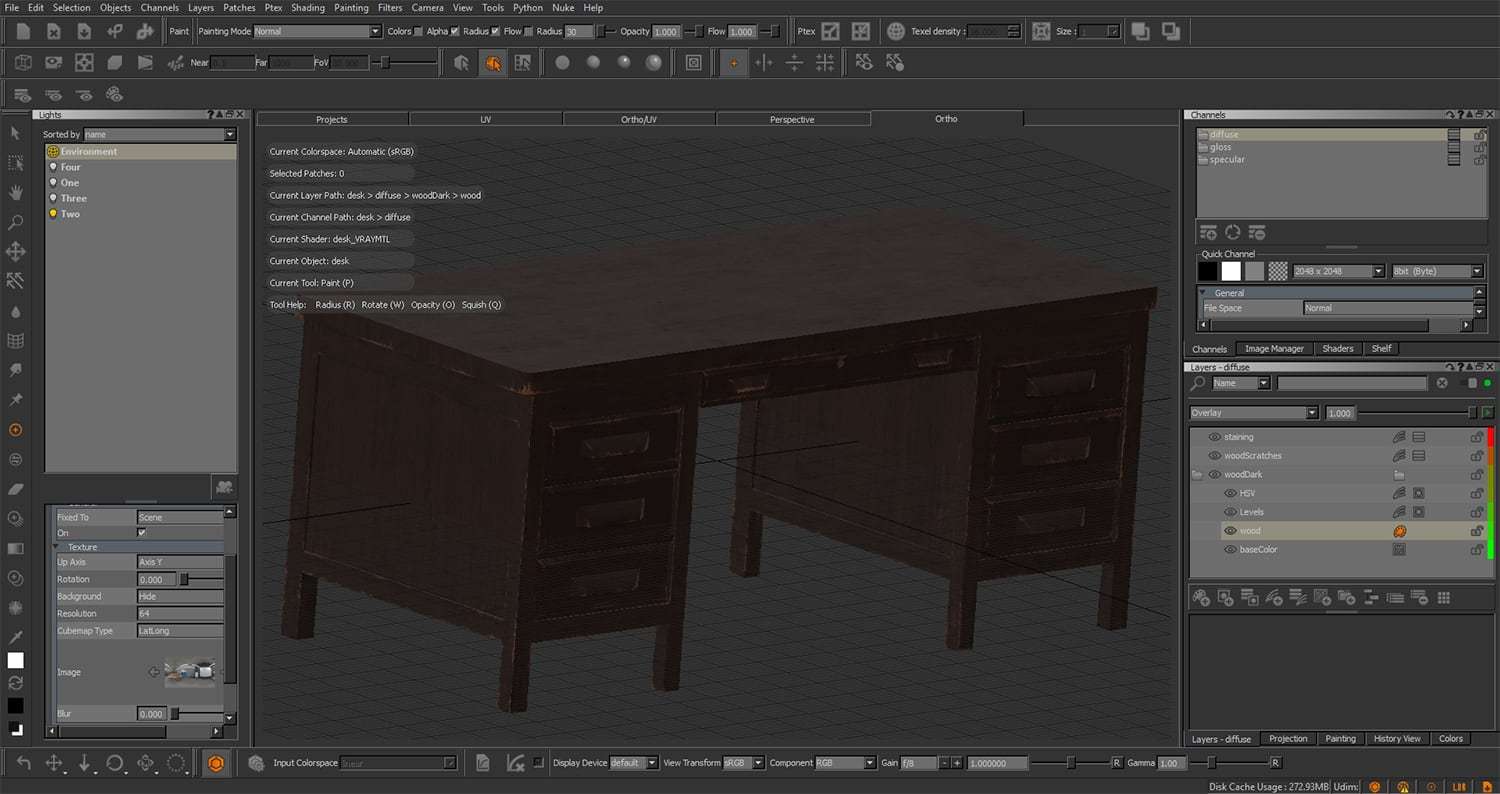

Once the preparation was done in Substance, I used Mari for the remainder of my texturing work. I started out by painting the Diffuse channel on all of my props, starting with the largest objects and working my way down (e.g. walls > desk > chairs > gun). One very important thing I always keep in mind is the story and mood of the overall environment.

Is the tenant of the office clean and organised, or messy and unkempt? Is the building new? Old? Well-maintained?

Maybe the tenant rests his shoes on his desk frequently and leaves scuff marks on the edge? Maybe he slides his chair back into the wall more often than he should and, in turn, ends up leaving marks on the wall?

Perhaps he’s in the middle of a breakthrough on a case, leaving his office in disarray with scattered notes, strung up theories and case files?

All of these little details are things you may not think of as being noticeable on a grand scale, but they all come together to help tell a story and make the scene that much more interesting.

Once the diffuse maps were painted out, it was fairly easy to continue on with the Specular, Gloss and Bump channels. I used the diffuse maps as a base and proceeded from there, keeping in mind proper specular/reflective properties, and using V-Ray materials in Mari with a simple studio setup HDRI to check my work. I followed these online documents during my process and they greatly helped me along.

VISCORBEL Vray Materials Theory

PBR Rendering: Meant for metallic/roughness workflow, but can be adapted for spec/gloss workflow as well

IoR List: There’s a section specifically for 3D artists to help pinpoint the perfect Refraction IoR for a particular material

Vray for Maya documentation by Chaos Group

Once all the channels (Diffuse, Spec, Gloss and Bump) were completed for every object, it was time to bring them into Maya and begin the final lighting/rendering tweaks before bringing everything into Nuke.

4. Lighting, Rendering & Final Tweaks

Lighting in film and games has always fascinated me. The same exact scene can be changed in so many ways just by tweaking the lighting setup. A bright and colourful atmosphere with the sun high in the sky can give a feeling of happiness and joy, dazzling you with vibrant colours and strong contrast between light and shadow.

In contrast, a dark and desaturated atmosphere can give the same environment a feeling of unease and doubt, chilling you with pitch black shadows and a cold blue overcast. I had two amazing books to help me along during this process, and I would recommend that every aspiring lighter own both: Light for Visual Artists and Color and Light: A Guide for the Realist Painter.

During the block out stage, I used a simple Vray Sun & Sky for the outside light source, and Vray Sphere lights for the interior hallway lights. This was a simple way for me to create a dusty, warm interior, meant to feel worn out and aged, with a contrast between the bright, golden hour sun outside and the cold, fluorescent lighting from the hallway just outside the door.

Once I got the mood I wanted, I replaced the Vray Sun & Sky with a Vray Area Light and Vray Dome light with a chosen HDRI plugged into it. This gave me more control over the lighting setup so I could tweak it to my liking, and allowed me to add both lights to their respective render passes.

I duplicated the scene onto a separate render layer in Maya and added in VrayEnvironmentFog to better accentuate the sun and give the room that dusty, hazy feeling I was looking for. Environment fog isn’t meant to be used heavily; just a small amount gave me the effect I wanted. With too much environment fog, I risked increasing render times and hazing out the scene. The beauty render on this layer was rendered out separately as a render pass for use in Nuke (partially for individual control, and partially to optimise render times).

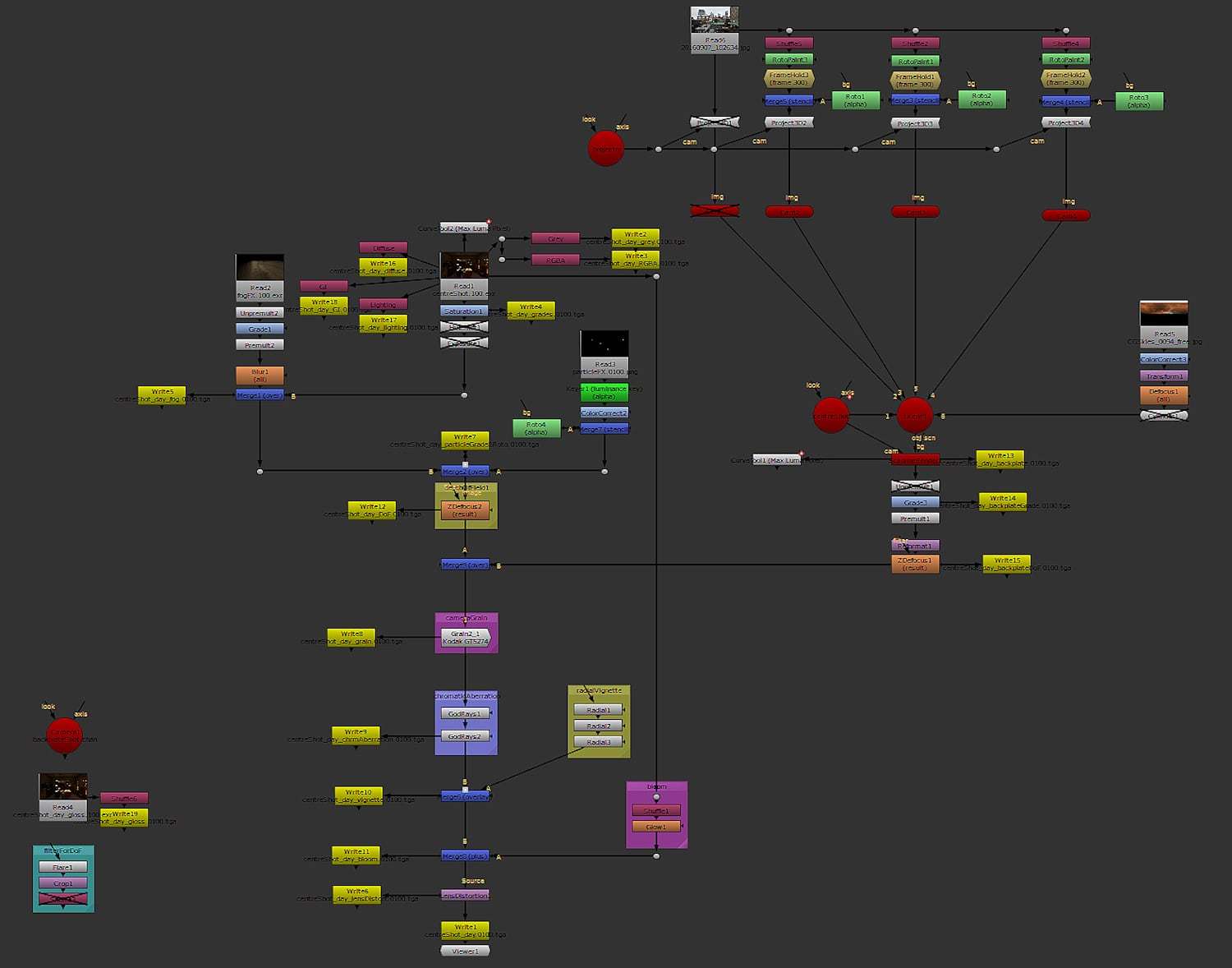

Back on the original Master Layer, I rendered out everything into their own respective passes (diffuse, lighting, gi, reflections, zDepth, etc.) to allow me full control in the compositing stage in Nuke. The lights were also added to their respective “Light Select” passes, one pass for each light, so that if I needed to tweak the lighting colour or intensity, I could do it all in the comp stage without needing to return to Maya and re-render.
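To illustrate why per-light passes are so handy, here is a minimal Python sketch of the idea (it is not Nuke code). It assumes each “Light Select” pass holds one light’s contribution in linear light, so the lit result is roughly the sum of the passes, and scaling a pass before adding it back changes that light’s colour or intensity. The pass names, pixel values and the `relight` helper are made up for the example.

```python
# Sketch: rebuild one lit pixel from per-light passes, with optional tweaks.
# All names and values here are hypothetical, chosen only for illustration.

def relight(light_passes, adjustments):
    """Sum per-light RGB contributions after scaling each by an RGB gain.

    light_passes: dict of light name -> (r, g, b) contribution in linear light
    adjustments:  dict of light name -> (r, g, b) multiplier; missing means no change
    """
    total = [0.0, 0.0, 0.0]
    for name, rgb in light_passes.items():
        gain = adjustments.get(name, (1.0, 1.0, 1.0))
        for channel in range(3):
            total[channel] += rgb[channel] * gain[channel]
    return tuple(total)


# One pixel as seen by a warm sun light and a cool hallway light.
passes = {
    "sun": (0.5, 0.5, 0.25),
    "hallway": (0.125, 0.25, 0.5),
}

original = relight(passes, {})
# Halve the hallway light without touching the sun or re-rendering.
dimmed_hallway = relight(passes, {"hallway": (0.5, 0.5, 0.5)})

print(original)        # (0.625, 0.75, 0.75)
print(dimmed_hallway)  # (0.5625, 0.625, 0.5)
```

In a real comp the same multiply-then-add happens per pixel on whole images, which is why a colour or intensity change becomes a quick grade instead of a re-render.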

When rendering, I output everything into Multichannel EXRs, allowing all of the render passes to be stored in one image file. The files are hefty (one frame took up 250 MB on my hard drive, with entire shots taking up to 80 GB of space!) but well worth it for the organisation. Once the shots were rendered out, all the passes compiled, and I was sure I had everything I needed, it was time for compositing and piecing everything together for the final product.
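If you want to budget disk space before committing to a render, the arithmetic is simple enough to sketch in Python. The example below takes the two figures above at face value and assumes decimal units (1 GB = 1000 MB); the 24 fps frame rate and both helper names are assumptions made for the illustration, not settings from my scene.

```python
# Rough disk-space budgeting for multichannel EXR sequences.
# Assumes decimal units (1 GB = 1000 MB); helper names are hypothetical.

def shot_size_gb(frame_count, frame_size_mb, mb_per_gb=1000):
    """Total size of a shot in GB for a given frame count and per-frame size."""
    return frame_count * frame_size_mb / mb_per_gb


def frames_for_budget(budget_gb, frame_size_mb, mb_per_gb=1000):
    """How many frames of a given size fit into a disk budget."""
    return budget_gb * mb_per_gb / frame_size_mb


frames = frames_for_budget(80, 250)
print(frames)                      # 320.0 frames fit in 80 GB at 250 MB each
print(frames / 24)                 # shot length in seconds at an assumed 24 fps
print(shot_size_gb(frames, 250))   # 80.0, back to the original budget
```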

5. Compositing

Compositing is an extremely important final touch that I believe shouldn’t be skipped over in any personal project, much less a professional project, as it can bring out the realism you just can’t achieve in renders alone. You can mask out mistakes or small issues in the scene, or even just make slight adjustments that would require hours of re-rendering if done through Maya and Vray.

The bulk of my compositing was done in Nuke, with the final reel itself compiled in After Effects. Though each shot required its own unique tweaks in colour, fog strength, particle intensity, etc., I’ll use my shot with the backplate as an example of how I approached the integration, grading, and comp stages.

I started out by bringing in my beauty pass from Maya and tweaking the colours to my liking. I brought in the fog next, graded it until I achieved the effect I wanted, and applied a quick blur to fix the noise that came out of my render (it was easier to render at lower settings and blur it in comp than to render it out at high quality in Maya). I realised at this point I needed dust particles for that added atmospheric effect, which I had been trying to achieve for weeks using Maya and nParticles. Unfortunately, Maya just wasn’t giving me the results I needed on the deadline I was working to, so I decided to do some research on other methods to achieve the effect I was looking for.

I ended up using Unreal Engine to generate the particles, and did some comp and roto work in Nuke to achieve the depth and realism I was looking for! I won’t go too in-depth on this topic, but I mostly figured it out with some help from the Game Environment students at school, online documentation, and breaking apart the example scenes included in Unreal Engine.

Once the dust was looking presentable, I used the ZDefocus node for some depth of field, driven by the zDepth pass from Vray. I comped the backplate in and added some final details for realism: camera grain, chromatic aberration, a slight vignette, bloom, and lens distortion.

All of these are very minor details that one may not notice with a quick glance over a photograph or a render, but they all come together to produce an image that we perceive as being “realistic.” These are aspects that we might not pick up instantly, but if they’re not included, your mind may feel as if something is off, and perceive the image as being fake.

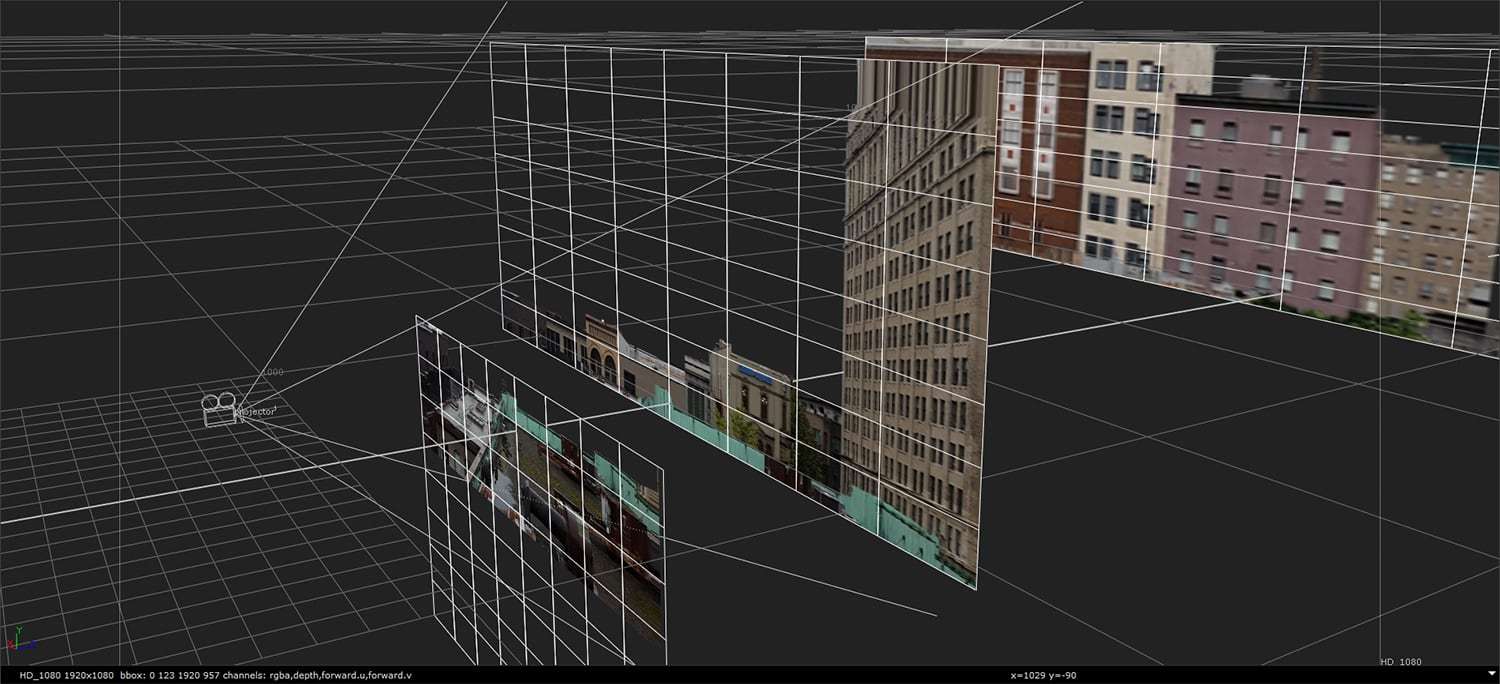

As for the backplate, I took some trips to old sections of Vancouver (Gastown, East Side, anything with heritage buildings) and Seattle (Pioneer Square) to snap some photos that could be suitable for a backdrop set in the 1940s. I made sure to take these photos on an overcast day, to minimise the amount of shadow and direct light so that I could add it all myself once I projected the image in Nuke.

Once I had a photograph I liked, I brought it into Photoshop and painted out anything that would stand out too much (new signs, trees, modern building interiors, etc.). Taking the edited photo into Nuke, I projected it onto cards for the foreground, mid-ground, and background.
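Splitting the photo across cards at different distances matters because a moving camera shifts near objects more than far ones. A rough Python sketch of that relationship for a simple pinhole camera moving sideways is below. Every number in it (focal length, sensor width, distances, camera move) is an assumed value for illustration, not a setting from my shot, and `parallax_shift_px` is a hypothetical helper.

```python
# Sketch: on-screen shift of a card when a pinhole camera moves sideways.
# shift on the sensor = focal_length * camera_move / depth
# All default values below are assumptions for illustration only.

def parallax_shift_px(camera_move_m, depth_m,
                      focal_length_mm=36.0,
                      sensor_width_mm=36.0,
                      image_width_px=1920):
    """Horizontal shift in pixels of a point at depth_m for a lateral camera move."""
    shift_on_sensor_mm = focal_length_mm * camera_move_m / depth_m
    return shift_on_sensor_mm / sensor_width_mm * image_width_px


move = 0.5  # metres of sideways camera travel (assumed)
for label, depth in [("foreground", 5.0), ("mid-ground", 20.0), ("background", 100.0)]:
    print(label, parallax_shift_px(move, depth))
```

The nearer the card, the larger the shift, which is the depth cue that a single flat photo behind the window cannot give.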

I also prepped a sky for the background. It turned out I didn’t actually need it, as it didn’t show through the window in any of my renders, but I kept it aside for future use.

Once the cards were ready and the parallax was corrected by repositioning the cards as needed, I graded the backplate, making sure to match the blackpoints/whitepoints of the backplate to those of the render. Mismatched blackpoints/whitepoints are the main reason why an image might not quite integrate with a CG object/environment, so it’s important that they are properly matched.

I was able to find out these values by using a CurveTool attached to the backplate and the render and finding the MaxLumaData values it gave me, which in turn I used in the Grade node. I then used a reformat node to match it to my render output size (1080p), defocused it a little bit, and merged it underneath my render.
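The matching itself boils down to a linear remap: stretch the backplate so that its darkest and brightest measured values land on the render’s darkest and brightest values. Here is a minimal Python sketch of that remap on a single value. It is not Nuke code, the `match_levels` helper is hypothetical, and the sample numbers are invented; in practice the inputs would be the measurements from the CurveTool.

```python
# Sketch: remap a backplate value so its black/white points match the render's.
# Helper name and all sample values are hypothetical.

def match_levels(value, src_black, src_white, dst_black, dst_white):
    """Linearly remap value from [src_black, src_white] to [dst_black, dst_white]."""
    scale = (dst_white - dst_black) / (src_white - src_black)
    return (value - src_black) * scale + dst_black


# Invented measurements: a slightly milky photo versus a more contrasty render.
plate_black, plate_white = 0.0625, 0.8125
render_black, render_white = 0.0, 1.5

print(match_levels(plate_black, plate_black, plate_white, render_black, render_white))  # 0.0
print(match_levels(plate_white, plate_black, plate_white, render_black, render_white))  # 1.5
print(match_levels(0.4375, plate_black, plate_white, render_black, render_white))       # 0.75
```

Applied per channel to every pixel, this is the kind of remap a grade performs when you feed it the measured black and white points of both images.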

Once everything was properly comped, I rendered my Nuke script out into TGA sequences, brought them into After Effects, added breakdowns and music, and ta-da! My demo reel was complete!