I’m a Visual Effects Artist with a strong background in Fine Arts. Although my profession was Art Restoration, I practiced many different techniques, including oil painting, watercolor, drawing, mosaic, and fresco. I earned my second major, as a 3D Generalist, at the Gnomon School of Visual Effects, Games + Animation.

Now, as a prominent Visual Effects Artist, I have contributed to some of the most well-known productions in the nation, such as Black-ish, The Walking Dead, and Arrested Development; programs that are not only hailed by the general public, but critically acclaimed as some of the most outstanding in the industry, as demonstrated by the Primetime Emmy, Golden Globe, Writers Guild of America and other notable awards these shows have received.

I have also worked on feature films by many renowned and lauded directors and producers, including The Best of Enemies, directed and executive produced by Robin Bissell (The Hunger Games, Seabiscuit) and starring Academy Award nominee Taraji P. Henson and Academy Award winner Sam Rockwell.

I have lent my abilities to some of the largest video game projects ever released, the most notable of which is the Destiny 2: Forsaken cinematic trailer, Last Stand. Additionally, I took on a challenging role as Visual Effects Artist for the production of Grammy-winner Imagine Dragons’ hit single “Zero”, written for Walt Disney Animation Studios’ major feature film, Ralph Breaks the Internet. The globally recognized single was played on Zane Lowe’s World Record on Apple Music’s Beats 1 show, and the video has amassed more than 15 million views on YouTube.

Lost Fish

The idea for this project was inspired by a beautiful concept by Sergey Kolesov. I wanted to bring the still image of the mermaid to life. From there, the idea evolved towards creating an animated short to tell the story of the "Lost Fish."

My goal for the mermaid scene was to showcase my generalist skill set. I tried to cover every aspect of the cinematic pipeline in this work: modeling, sculpting, shading, texturing, lighting, rigging, animation, simulation, rendering, and compositing. The result is an animated sequence.

In this article I am going to show my workflow for creating a sequence from start to finish. I’ll provide some tips on how to speed up your process and get a better result in the end.

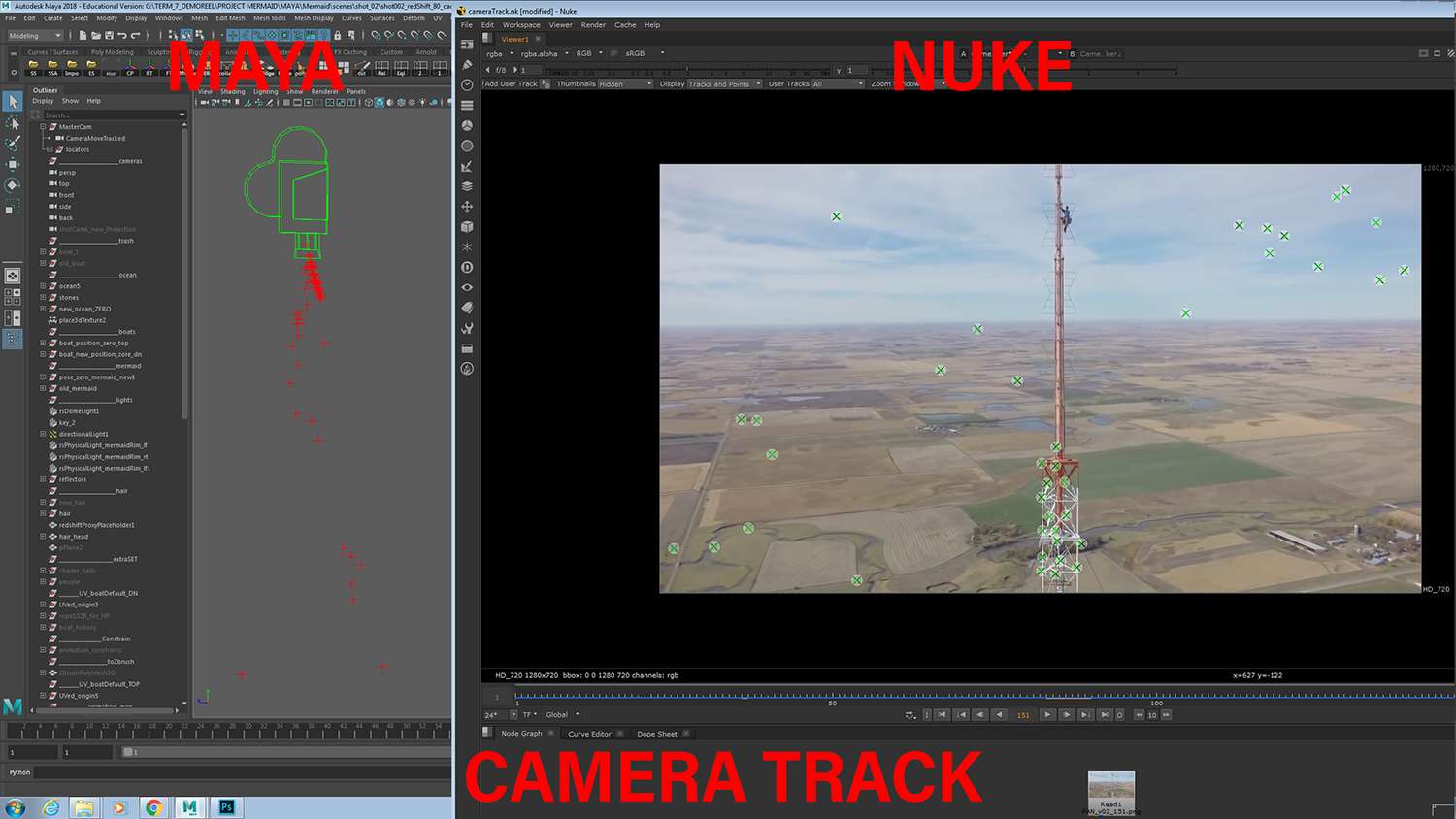

The main software I worked with was Autodesk Maya, with Redshift as the renderer. Additional software used for the project: Houdini, ZBrush, Mudbox, Mari, Substance, Adobe Photoshop, XGen, Nuke, Adobe After Effects, and Adobe Premiere.

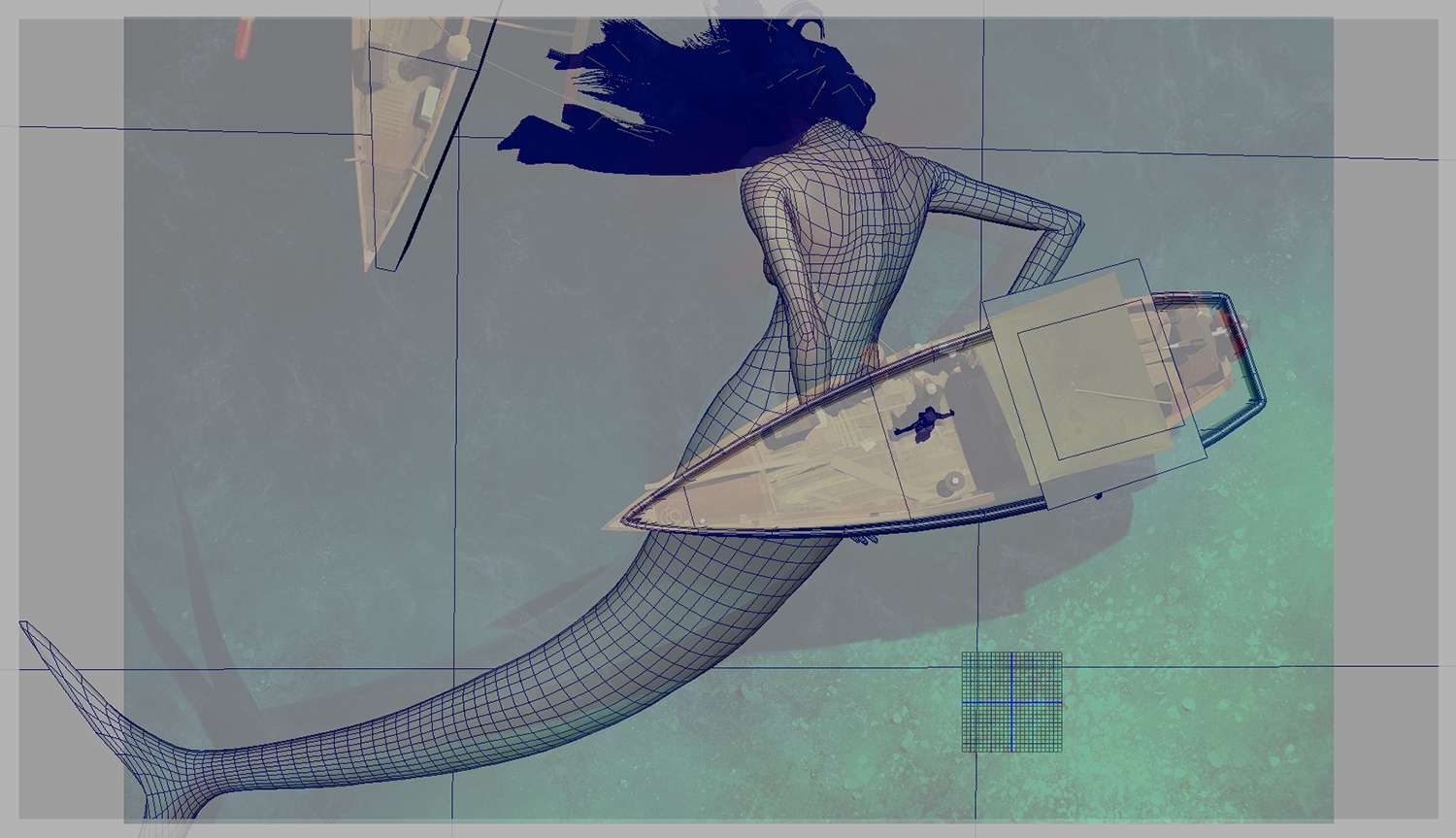

Usually my process starts with blocking out the main composition and modeling in Maya, trying my best to match the concept. Because the image is static and I wanted the project to be an animated sequence, I had to decide on the characteristics that every object or hero would have.

I decided to go for an almost slow-motion feeling. The mermaid is unconscious while everything around her is ever so slightly moving, creating a deceptively peaceful impression. Once you start animating your scene, the concept becomes more of a composition reference, so you don't have to line up every object perfectly.

Here are some of the tricks I usually use to move faster on a project!

First, I try to bring renders into Nuke as early as possible, so that I can judge how the image looks overall and decide which parts need more work and which need less. This way I can distribute my time more efficiently.

Second, if I am doing an animated sequence, I try to get everything moving as early as possible and see it in a playblast, so I can lock down composition and timing in the beginning stages of the project. Plus, I can troubleshoot any complications with animation or simulation while I am still working with gray-shaded models.

Lastly, in my experience, rendering in Redshift takes much less time, and I get a much cleaner result, especially when it comes to refraction noise. This was obviously very important for this project, where water makes up the biggest part of the image.

In this specific project, transforming the still image into a moving sequence was one of the most time-consuming parts, but also one of the most important. Here is the step-by-step guide that I established while working on the mermaid scene:

1. I started with the camera animation. I used tracked camera data from real drone footage. The imperfect camera move from the real footage made the shot feel more realistic and organic.

2. The ocean simulation was created in Houdini using the small ocean setup. The ocean was brought into Maya as an Alembic file. The most important parameters for the ocean were the speed and scale of the waves.

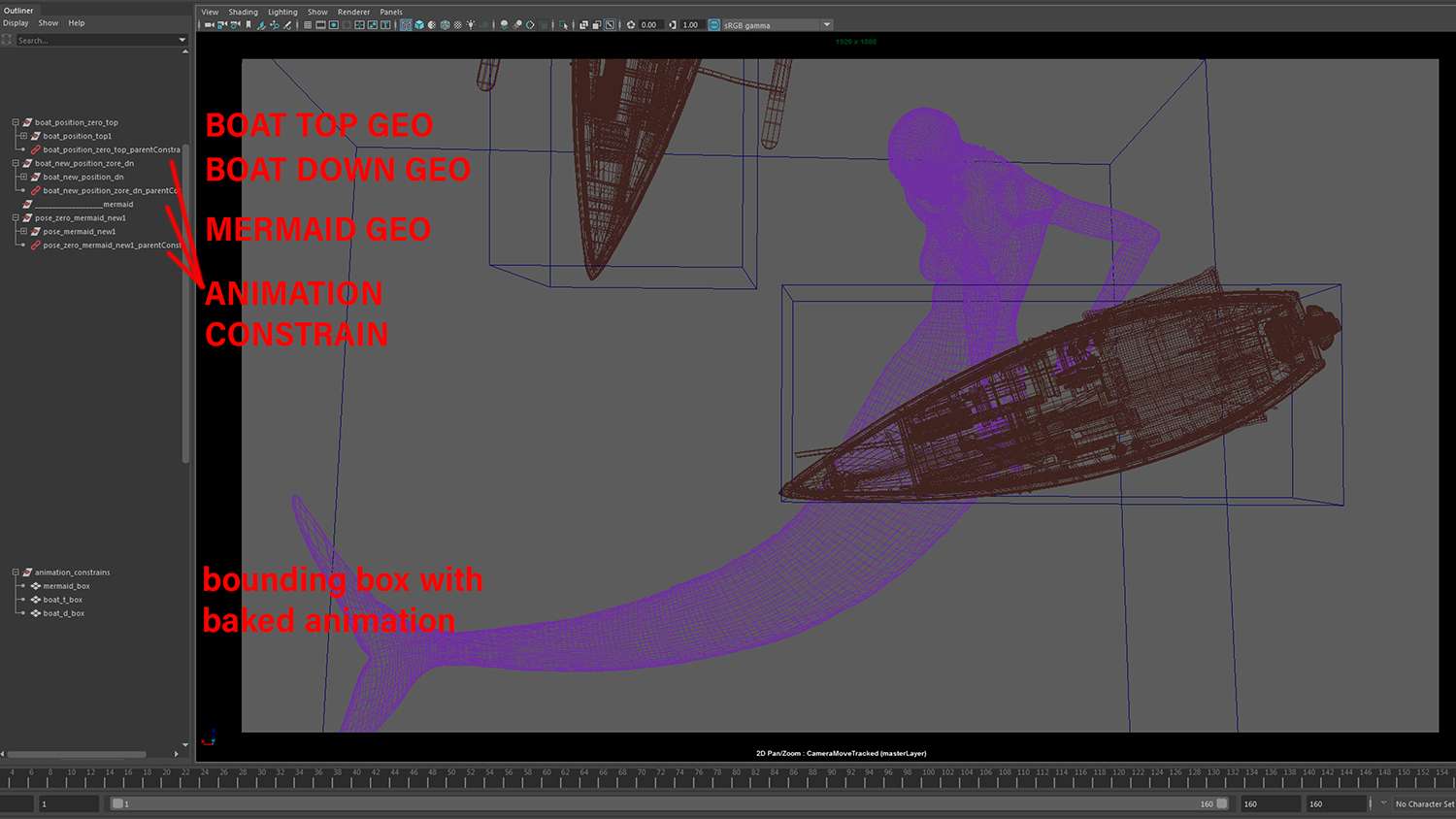

3. The floating animation for the mermaid and the boats was also created in Houdini, using a cosine equation script applied to bounding boxes. After that, the bounding boxes with baked animation were brought into Maya, where the render geo was parent constrained to those boxes. This way the render geo could be edited or swapped at any moment without redoing the animation from scratch.

4. The tail of the mermaid was rigged and animated separately, on top of the floating animation. The objects that get rigged and animated always need to have proper topology.

5. The mermaid's hair was created with XGen Interactive Groom in Maya, converted to polygons and exported to Houdini, where the geo was animated. It was brought back as a Redshift proxy, which was very helpful for keeping the scene light: a proxy brings only a bounding box into the scene instead of the high-poly geo. The hair proxy needed to be parent constrained to the same floating box animation so that it followed the main mermaid geo.

7. The fishermen's animation was exported from Mixamo and re-timed accordingly in Maya.

8. The rope geo was rigged so that one end followed the fisherman's animation and the other followed the mermaid's, without stretching or deforming.

9. The fabric on top of the boat and a small fish were animated in Houdini, using the same cosine equation script.

10. The blood was done and rendered separately, and later composited onto the final render in Nuke.
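To illustrate the cosine-driven floating motion from steps 3 and 9, here is a minimal Python sketch. It is not the Houdini script used on the project; the function names, amplitude, period, and frame rate are all assumptions chosen for illustration. It shows how a cosine of time gives a smooth up-and-down offset that can be baked per frame onto a bounding box.

```python
import math

def float_offset(frame, fps=24.0, amplitude=0.15, period_s=4.0, phase=0.0):
    """Vertical offset (scene units) of a floating object at a given frame.

    All default values are hypothetical; tune them per object.
    """
    t = frame / fps  # time in seconds
    return amplitude * math.cos(2.0 * math.pi * t / period_s + phase)

def bake_float_keys(start, end, **kwargs):
    """Return {frame: offset} for every frame in [start, end]."""
    return {f: float_offset(f, **kwargs) for f in range(start, end + 1)}

# Bake four seconds of motion at 24 fps for one bounding box.
keys = bake_float_keys(0, 96, amplitude=0.15, period_s=4.0)
```

Giving each object a different `phase` or `period_s` keeps the mermaid, the boats, and the fabric from bobbing in perfect sync.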

While settling all of the animation aspects, I worked in parallel on high-poly modeling, sculpting and texturing. Most of my textures were done in Substance Painter, except for the mermaid. Her textures were done in Mari.

The next step in my workflow was lookdev and lighting. To make the lighting more interesting, I created multiple reflectors with different colors and positioned them around the main geo, so that it picked up a variety of reflections that emphasize both the story and the shape of the geo.

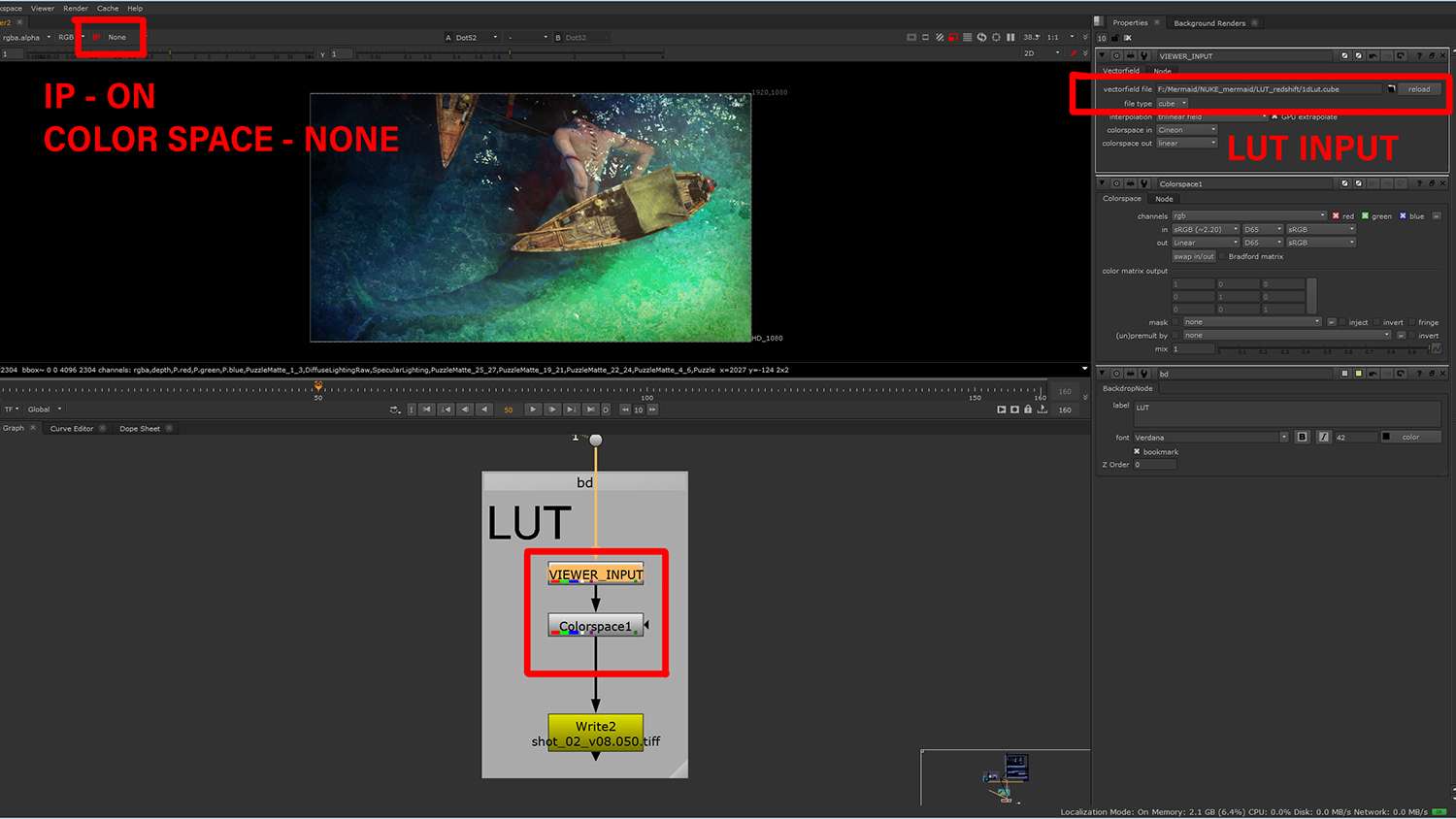

For the image to be more realistic, I used a LUT while previewing renders in Maya with Redshift. A LUT (lookup table) is a pre-built color correction setup that applies a color adjustment curve to the image. The LUT is not baked into the renders, so I used the same LUT in Nuke to preview and render the final sequence.
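As a rough illustration of what a LUT does, the sketch below maps each channel value through a small lookup table with linear interpolation. It is a simplified 1D example with a made-up five-entry contrast curve; production LUTs are often 3D and loaded from a file, and none of the names here come from Maya, Redshift, or Nuke.

```python
def apply_lut_1d(value, lut):
    """Map a 0..1 value through a 1D LUT using linear interpolation."""
    v = min(max(value, 0.0), 1.0)      # clamp to the LUT's input range
    pos = v * (len(lut) - 1)           # fractional index into the table
    lo = int(pos)
    hi = min(lo + 1, len(lut) - 1)
    frac = pos - lo
    return lut[lo] * (1.0 - frac) + lut[hi] * frac

# Hypothetical contrast curve: darkens shadows, brightens highlights.
contrast_lut = [0.0, 0.15, 0.5, 0.85, 1.0]

def view_pixel(rgb, lut):
    """Return the display value of a pixel; the stored render is untouched."""
    return tuple(apply_lut_1d(c, lut) for c in rgb)

rendered = (0.125, 0.5, 1.0)
displayed = view_pixel(rendered, contrast_lut)
```

Because the curve is applied only when viewing, the rendered values stay as they are, which is why the same LUT has to be applied again in compositing.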

During the whole process, I was constantly going back and forth between rendering in Maya and working with the renders in Nuke, building up a compositing network. This led me to the last part of my workflow.

My compositing process started with rebuilding the beauty pass and adjusting colors, tonality, reflectivity and so on. To be able to do this, all my renders had the render passes baked into multichannel EXR files.
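The idea behind rebuilding the beauty pass can be sketched in a few lines of Python: additive render passes are summed back together, and a per-pass gain lets you adjust one component (for example reflections) without re-rendering. The pass names and pixel values below are hypothetical and are not the actual passes from this project.

```python
def rebuild_beauty(passes, gains=None):
    """Sum additive render passes into one pixel, with optional per-pass gain."""
    gains = gains or {}
    channels = len(next(iter(passes.values())))
    out = [0.0] * channels
    for name, rgb in passes.items():
        g = gains.get(name, 1.0)       # passes without a gain are left as-is
        for i in range(channels):
            out[i] += rgb[i] * g
    return tuple(out)

# One pixel from three hypothetical additive passes.
pixel_passes = {
    "diffuse":    (0.20, 0.25, 0.30),
    "reflection": (0.10, 0.10, 0.12),
    "refraction": (0.05, 0.08, 0.10),
}

beauty = rebuild_beauty(pixel_passes)
shinier = rebuild_beauty(pixel_passes, gains={"reflection": 2.0})
```

With all gains at 1.0 the sum should match the original beauty render, which is a useful sanity check before starting any adjustments.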

Moreover, I had multi mattes for every object, or group of similar objects, in the scene. In the case of the boat, I had multi mattes for each unique material group. This gave me full control over my renders in post.
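To show why mattes give this kind of control, here is a small Python sketch of a matte-limited grade: the correction is applied fully where the matte is 1, not at all where it is 0, and blended in between. The function name and values are assumptions for illustration, not a Nuke API.

```python
def grade_with_matte(rgb, matte, gain):
    """Apply a per-channel gain only where the matte covers the pixel.

    matte is 0..1 coverage; gain is an (r, g, b) multiplier.
    """
    return tuple(
        c * (1.0 - matte) + (c * g) * matte
        for c, g in zip(rgb, gain)
    )

pixel = (0.2, 0.4, 0.6)
warm_gain = (2.0, 1.0, 0.5)   # hypothetical grade: more red, less blue

outside = grade_with_matte(pixel, 0.0, warm_gain)   # pixel not in the matte
inside = grade_with_matte(pixel, 1.0, warm_gain)    # pixel fully in the matte
edge = grade_with_matte(pixel, 0.5, warm_gain)      # anti-aliased edge pixel
```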

After I was satisfied with how all of the objects looked together in the image, I created a cinematic color correction to emphasize the story and the mood that I wanted to bring to my image. The last step was to apply photorealistic elements on top of my render, such as light rays, motion blur, depth of field, noise, and vignette.
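Of these finishing elements, the vignette is the simplest to describe numerically. The sketch below computes a brightness multiplier that is 1.0 at the image center and falls off toward the corners. The quadratic falloff and the `strength` value are assumptions for illustration, not the settings used in the final comp.

```python
import math

def vignette_factor(x, y, width, height, strength=0.4):
    """Brightness multiplier for pixel (x, y): 1.0 at center, darker at corners."""
    cx, cy = (width - 1) / 2.0, (height - 1) / 2.0
    r = math.hypot(x - cx, y - cy)     # distance from the image center
    r_max = math.hypot(cx, cy)         # distance from center to a corner
    if r_max == 0:
        return 1.0
    return 1.0 - strength * (r / r_max) ** 2

center = vignette_factor(50, 50, 101, 101)
corner = vignette_factor(0, 0, 101, 101)
```

Multiplying each pixel by its factor darkens the frame edges and helps pull the eye toward the mermaid in the center of the composition.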

Thank you. I hope you enjoyed the article and learned something new.